
Thruput messages (similar to the English word "throughput") come in a few varieties. Thruput is measured in the indexing pipeline.

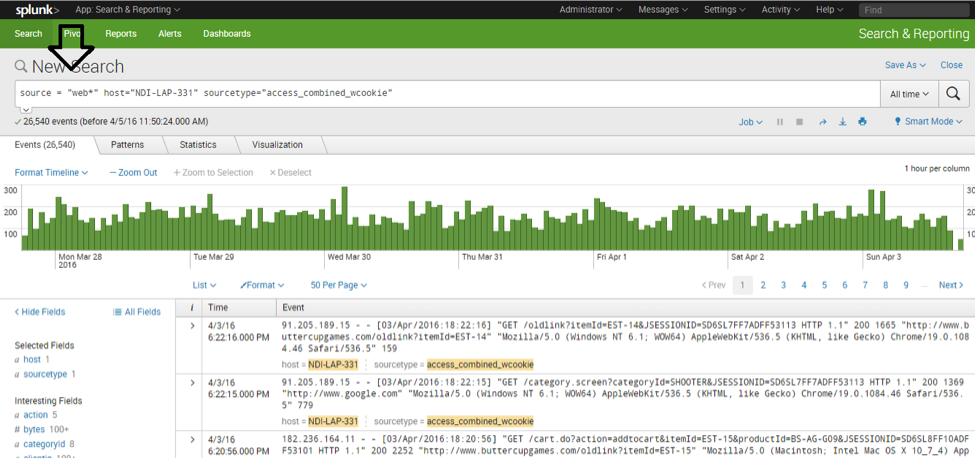

In queue messages, if current_size remains near zero, then the indexing system is probably not being taxed in any way. If current_size remains near max_size (1000 in the examples here), then more data is being fed into the system (at the time) than it can process in total.

A queue message can also look like this:

group=queue, name=parsingqueue, blocked=true, max_size=1000, filled_count=0, empty_count=8, current_size=0, largest_size=2, smallest_size=0

This message contains the blocked string, indicating that the queue was full and that something tried to add more to it and couldn't. A queue becomes unblocked as soon as the code pulling items out of it pulls an item. Many blocked queue messages in a sequence indicate that data is not flowing at all for some reason. A few scattered blocked messages indicate that flow control is operating, which is normal for a busy indexer.

If you want to look at the queue data in aggregate, graphing the average of current_size is probably a good starting point.

There are queues in place for data going into the parsing pipeline, and for data between parsing and indexing. Each networking output also has its own queue, which can be useful for determining whether the data can be sent promptly or whether there is some network or receiving-system limitation.

Generally, filled_count and empty_count cannot be productively used for inferences.
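The aggregate view of queue messages described above can be sketched in a few lines of Python. This is only an illustration, not a Splunk tool: the function names are made up for this example, the input is assumed to be the comma-separated key=value part of each message, the blocked flag is assumed to appear as blocked=true, and the last two sample messages use invented values.

```python
from collections import defaultdict


def parse_fields(body):
    """Split 'key=value, key=value' text into a dict of strings."""
    fields = {}
    for pair in body.split(","):
        key, sep, value = pair.strip().partition("=")
        if sep:
            fields[key] = value
    return fields


def summarize_queues(messages):
    """Return {queue name: (average current_size, longest run of blocked messages)}."""
    sizes = defaultdict(list)
    current_run = defaultdict(int)
    longest_run = defaultdict(int)
    for body in messages:
        fields = parse_fields(body)
        if fields.get("group") != "queue":
            continue
        name = fields["name"]
        sizes[name].append(int(fields["current_size"]))
        if fields.get("blocked") == "true":
            current_run[name] += 1
            longest_run[name] = max(longest_run[name], current_run[name])
        else:
            current_run[name] = 0  # an unblocked message ends the run
    return {
        name: (sum(values) / len(values), longest_run[name])
        for name, values in sizes.items()
    }


messages = [
    "group=queue, name=parsingqueue, max_size=1000, filled_count=0, empty_count=8, current_size=0, largest_size=2, smallest_size=0",
    "group=queue, name=parsingqueue, blocked=true, max_size=1000, filled_count=0, empty_count=8, current_size=0, largest_size=2, smallest_size=0",
    # The next two messages are invented for illustration only.
    "group=queue, name=parsingqueue, blocked=true, max_size=1000, current_size=990",
    "group=queue, name=parsingqueue, max_size=1000, current_size=10",
]

print(summarize_queues(messages))
```

A long run of blocked messages for one queue is the pattern to watch for; a run of one or two is what normal flow control looks like.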

Most of the values in a queue message are not interesting. But current_size, especially when considered in aggregate across events, can tell you which portions of Splunk indexing are the bottlenecks.

For reference, a queue message without the blocked string looks like this:

group=queue, name=parsingqueue, max_size=1000, filled_count=0, empty_count=8, current_size=0, largest_size=2, smallest_size=0

For pipeline messages, plotting totals of cpu_seconds by processor can show you where the CPU time is going in indexing activity. Looking at the numbers for executes can give you an idea of data flow. For example, compare the executes values reported for these four processors:

group=pipeline, name=merging, processor=aggregator

group=pipeline, name=merging, processor=readerin

group=pipeline, name=merging, processor=regexreplacement

group=pipeline, name=merging, processor=sendout

If the count for the aggregator is well above the counts for the others, then it's pretty clear that a large portion of your items aren't making it past the aggregator. This might indicate that many of your events are multiline and are being combined in the aggregator before being passed along.

Read more about Splunk's data pipeline in "How data moves through Splunk" in the Distributed Deployment Manual.
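As a rough sketch of the per-processor totals mentioned above, the following Python sums cpu_seconds and executes for each pipeline processor. The function names are hypothetical, the input is assumed to be the key=value part of each pipeline message, and all numeric values in the sample data are invented for illustration.

```python
from collections import defaultdict


def parse_fields(body):
    """Split 'key=value, key=value' text into a dict of strings."""
    fields = {}
    for pair in body.split(","):
        key, sep, value = pair.strip().partition("=")
        if sep:
            fields[key] = value
    return fields


def totals_by_processor(messages):
    """Return {(pipeline name, processor): (total cpu_seconds, total executes)}."""
    cpu = defaultdict(float)
    executes = defaultdict(int)
    for body in messages:
        fields = parse_fields(body)
        if fields.get("group") != "pipeline":
            continue
        key = (fields["name"], fields["processor"])
        cpu[key] += float(fields.get("cpu_seconds", 0))
        executes[key] += int(fields.get("executes", 0))
    return {key: (cpu[key], executes[key]) for key in cpu}


# Invented sample values, not taken from a real metrics.log.
messages = [
    "group=pipeline, name=merging, processor=aggregator, cpu_seconds=0.5, executes=100",
    "group=pipeline, name=merging, processor=sendout, cpu_seconds=0.25, executes=60",
    "group=pipeline, name=merging, processor=aggregator, cpu_seconds=0.25, executes=50",
]

for (pipeline, processor), (cpu_total, exec_total) in totals_by_processor(messages).items():
    print(pipeline, processor, cpu_total, exec_total)
```

Sorting the result by total cpu_seconds shows where the CPU time goes, while comparing executes between neighboring processors hints at where items stop flowing.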

Metrics.log has a variety of introspection information for reviewing product behavior.

Metrics.log is a periodic report, taken every 30 seconds or so, of recent Splunk software activity.

By default, metrics.log reports the top 10 results for each type. You can change that number of series from the default by editing the value of maxseries in the [metrics] stanza in limits.conf.

A typical line looks like this:

01-27-2010 15:43:54.913 INFO Metrics - group=pipeline, name=parsing, processor=utf8, cpu_seconds=0.000000, executes=66, cumulative_hits=301958

First, boiler plate: the timestamp, the "severity," which is always INFO for metrics events, and then the kind of event, "Metrics."

Next comes the group, which indicates what kind of metrics data it is. There are a few groups in the file, including pipeline, queue, and thruput.

Pipeline messages are reports on the Splunk pipelines, which are the strung-together pieces of "machinery" that process and manipulate events flowing into and out of the Splunk system. You can see how many times data reached a given machine in the Splunk system (executes), and you can see how much CPU time each machine used (cpu_seconds).
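To make the line structure concrete, here is a minimal Python sketch that splits a metrics.log line into the boilerplate (timestamp and severity) and its key=value fields. The regular expression and the function name are assumptions based only on the sample line in this post; the optional timezone offset is a guess at a variation you might encounter, not something the sample shows.

```python
import re

# Boilerplate prefix: timestamp, optional timezone offset (assumed), severity, "Metrics -".
LINE_RE = re.compile(
    r"^(?P<timestamp>\d{2}-\d{2}-\d{4} \d{2}:\d{2}:\d{2}\.\d{3})"
    r"(?: [+-]\d{4})? (?P<severity>[A-Z]+) +Metrics - (?P<body>.*)$"
)


def parse_metrics_line(line):
    """Return a dict of fields for one metrics.log line, or None if it does not match."""
    match = LINE_RE.match(line.strip())
    if match is None:
        return None
    fields = {
        "timestamp": match.group("timestamp"),
        "severity": match.group("severity"),
    }
    for pair in match.group("body").split(","):
        key, sep, value = pair.strip().partition("=")
        if sep:
            fields[key] = value
    return fields


sample = (
    "01-27-2010 15:43:54.913 INFO Metrics - group=pipeline, name=parsing, "
    "processor=utf8, cpu_seconds=0.000000, executes=66, cumulative_hits=301958"
)
record = parse_metrics_line(sample)
print(record["group"], record["processor"], record["executes"])  # pipeline utf8 66
```

All values are kept as strings here; convert cpu_seconds and executes to numbers only when you need to total or average them.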
